AI transparency isn’t optional anymore - it’s expected. Customers demand to know how AI-powered customer service decisions are made, and regulations like the EU AI Act enforce this. Transparent AI systems, often called "glass box" models, explain their decision-making processes, unlike "black box" systems that keep users in the dark. This shift is reshaping customer support.

Here’s why transparency matters:

- Trust: 95% of consumers want to understand why AI makes certain decisions. Clear explanations build credibility and loyalty.

- Compliance: New laws require companies to disclose how AI works. Non-compliance can lead to fines as high as €35 million.

- Bias Detection: Transparent systems help identify and fix biases, ensuring fair treatment for all customers.

- Performance Monitoring: Visibility into AI processes speeds up issue resolution and improves reliability.

- Employee Confidence: Explainable AI empowers support teams to validate and improve AI outputs.

- Customer Decisions: Clear AI recommendations help users make informed choices, reducing frustration.

- Reputation: Transparent brands stand out, while opaque systems risk losing customer trust.

The takeaway? Transparency isn’t just about meeting legal requirements - it’s about creating trust, improving performance, and strengthening customer relationships.

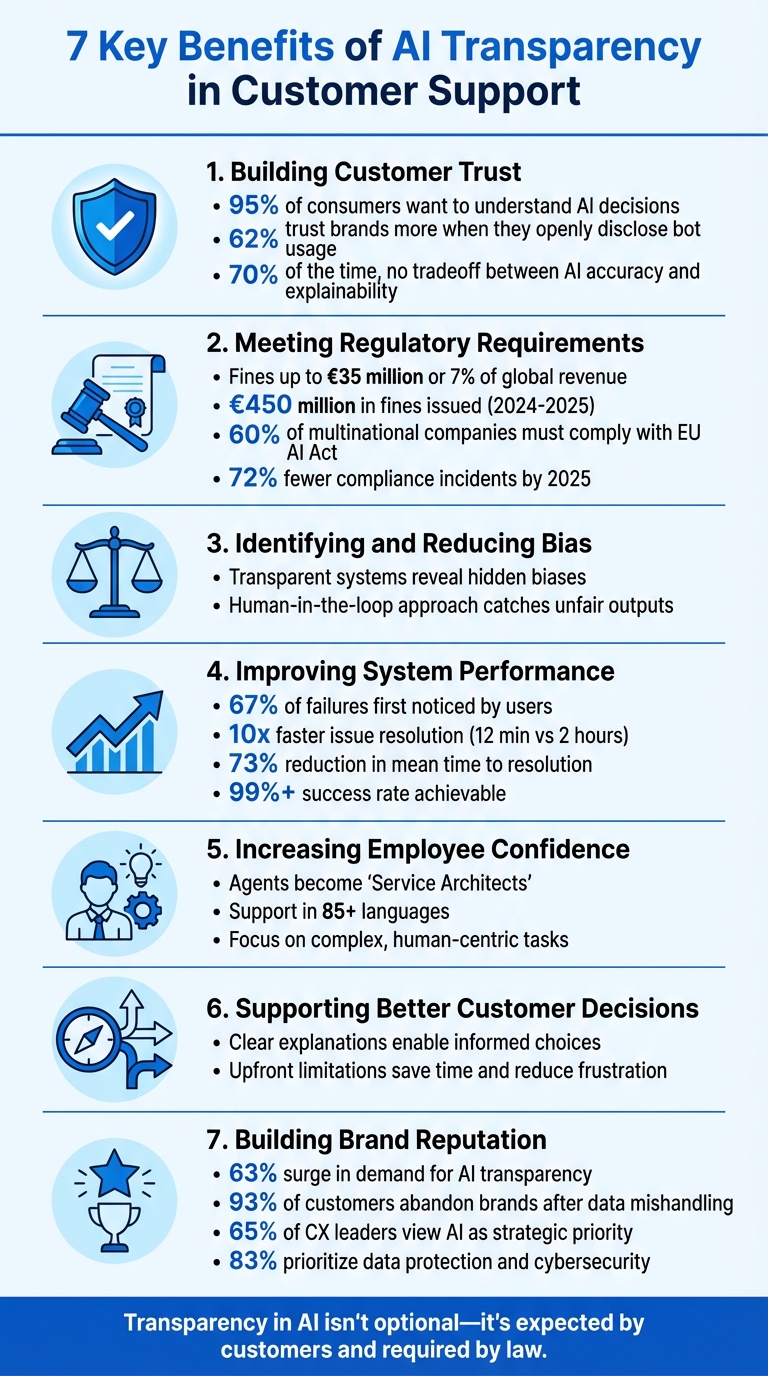

7 Key Benefits of AI Transparency in Customer Support: Statistics and Impact

1. Building Customer Trust

Being upfront about how AI works is a game-changer for trust. When brands provide clear explanations - even for outcomes customers might not like - it shows respect and builds credibility. In fact, 62% of consumers say they trust a brand more when it openly discloses the use of bots rather than hiding it [6]. Honest communication like this avoids feelings of deception and strengthens long-term loyalty.

The move from "black box" AI to "glass box" AI is reshaping how people view automation. Instead of seeing it as a mysterious, independent force, customers start to see it as a tool guided by human oversight, working in their best interest. This shift helps reduce skepticism and uncertainty. As Luke Cuthbertson from Route 101 explains:

"Accuracy builds credibility, explanation builds trust." [3]

Clarity is key. When customers understand the reasoning behind AI-driven recommendations, they’re more likely to accept them - even if the suggestion isn’t exactly what they wanted. Interestingly, research shows that 70% of the time, there’s no tradeoff between AI accuracy and explainability [5]. In other words, you can deliver both precision and transparency without sacrificing one for the other.

Explainable AI (XAI) takes this a step further by allowing customers and support agents to verify AI-generated suggestions in real-time. For example, instead of simply recommending a product, a transparent system might explain: "We’re suggesting this based on your positive reviews for similar items." By giving visibility into the data and algorithms behind decisions, customers feel reassured that the system is fair and free from hidden biases [5][1].
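
The explained recommendation above can be sketched in a few lines of Python. This is a minimal illustration, assuming a hypothetical `Recommendation` record that carries its own evidence. The class, field names, and sample product are assumptions for the example, not any vendor's API.

```python
from dataclasses import dataclass, field


@dataclass
class Recommendation:
    """A suggestion bundled with the evidence behind it (hypothetical schema)."""
    product: str
    reasons: list[str] = field(default_factory=list)

    def explain(self) -> str:
        # Fall back to an honest message instead of inventing a reason.
        if not self.reasons:
            return f"We're suggesting {self.product}, but no specific reason is available."
        return f"We're suggesting {self.product} based on " + " and ".join(self.reasons) + "."


# Illustrative data only.
rec = Recommendation(
    product="the trail backpack",
    reasons=["your positive reviews for similar items"],
)
print(rec.explain())
```

Keeping the reasons next to the suggestion means the same record can feed the customer-facing message and the agent's review screen.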

To make this trust tangible, brands should follow an AI customer support implementation guide. Clearly disclose when AI is involved using simple, jargon-free language. Visual aids can also help by breaking down key data points behind recommendations. When customers see the "why" behind decisions, they feel more confident and are more likely to stay engaged. Not only does this transparency build trust, but it also sets the stage for meeting regulatory requirements effectively.

2. Meeting Regulatory Requirements

Transparency isn’t just a choice anymore - it’s a legal requirement. Regulations like the EU AI Act and California's AB 2013 mandate that businesses clearly document how their AI systems make decisions. Without proper audit trails, companies risk steep penalties. For example, the EU AI Act allows fines reaching up to €35 million or 7% of global annual turnover for serious violations [7]. In fact, between 2024 and 2025, EU data protection authorities issued €450 million in fines related to AI transparency breaches [7].

AI providers were required to meet specific technical documentation standards by August 2, 2025, or face fines of up to 3% of global revenue [7]. And because the EU AI Act applies to any AI system impacting users within the EU, roughly 60% of multinational companies - regardless of where they’re headquartered - must comply [7].

Thorough documentation doesn’t just mitigate legal risks; it builds accountability. This involves tracking every decision, update, and approval, while recording who made changes, when, and why [9]. Companies that integrated traceability mechanisms directly into their AI processes reported substantial improvements in regulatory compliance. By 2025, 72% of these organizations saw fewer compliance-related incidents [9]. Jamie Dimon, CEO of JPMorgan Chase, captured the essence of this shift when he said:

"Opaque decision-making cannot stay in place in credit scoring and trading systems." [8]

This growing demand for accountability is reshaping AI systems. Nearly half of finance leaders now require full auditability of every AI decision before approving new projects [8]. On top of that, automated audit trails can cut manual compliance work by as much as 60% [7]. Tools like immutable logging systems, including blockchain-based solutions, ensure tamper-proof records for auditors [9]. Beyond meeting legal obligations, this level of documentation reinforces ethical practices and strengthens trust. It also plays a key role in addressing bias, which ties into the next benefit.
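
One simple way to make a log tamper-evident is to chain entries by hash. The sketch below is an in-memory illustration rather than a blockchain or a production audit system: `AuditLog`, its field names, and the sample entries are all assumptions. It records who, what, why, and when, and it detects later edits to stored entries.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(entry: dict) -> str:
    # Canonical JSON so the same entry always hashes to the same value.
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log where each entry commits to the previous one."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, actor: str, action: str, reason: str) -> dict:
        entry = {
            "actor": actor,    # who made the change
            "action": action,  # what changed
            "reason": reason,  # why it changed
            "timestamp": datetime.now(timezone.utc).isoformat(),  # when
            "prev_hash": self.entries[-1]["hash"] if self.entries else "0" * 64,
        }
        entry["hash"] = _digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return False if an entry was edited or the hash chain is broken."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("j.doe", "raised refund auto-approval limit", "policy update approved by compliance")
log.record("release-bot", "deployed new intent classifier", "scheduled retrain")
print(log.verify())  # True for an untouched log

log.entries[0]["reason"] = "edited after the fact"
print(log.verify())  # False once a stored entry is altered
```

A chain like this only shows that something changed. Making records tamper-proof for auditors still depends on where the log is stored and who can write to it.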

3. Identifying and Reducing Bias

AI systems can unintentionally treat some customer groups unfairly due to flawed training data or hidden patterns. Without visibility into how these systems work, biases often go unnoticed, leading to unequal treatment in customer support or even restricted access to services. When businesses gain a clear understanding of their AI's decision-making, they can spot and resolve these problems before they negatively impact customers. This clarity helps create actionable ways to detect and address bias effectively.

Making AI decision-making processes visible is key to addressing bias. Transparency allows companies to identify where discrimination happens and take steps to ensure all customer groups are treated fairly.

Reducing bias starts with practical steps like assembling diverse testing teams and routinely auditing training data. Many companies are also narrowing the scope of AI tasks to specific areas - such as billing questions or product troubleshooting - where ethical concerns are easier to manage. Tools like IBM's AI Fairness 360 and Google's Fairness Indicators can highlight disparities across demographic groups. Adding human oversight through feedback loops, where customers report incorrect or unfair responses, creates a "human-in-the-loop" approach. This allows agents to quickly correct biased outputs [[10]](<https://www.zendesk.com/blog/7-ways-reduce-bias-conversational AI>). These efforts not only minimize bias but also improve the overall performance of AI systems.
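
A basic disparity check needs no special tooling. The plain-Python sketch below does not use the AI Fairness 360 or Fairness Indicators APIs; it compares AI resolution rates across customer groups and flags large gaps. The group labels, the sample tickets, and the 0.8 review threshold are assumptions chosen for illustration.

```python
from collections import defaultdict


def resolution_rates(tickets: list[dict]) -> dict[str, float]:
    """Share of tickets resolved by the AI, per customer group."""
    totals: dict[str, int] = defaultdict(int)
    resolved: dict[str, int] = defaultdict(int)
    for t in tickets:
        totals[t["group"]] += 1
        resolved[t["group"]] += int(t["resolved"])
    return {g: resolved[g] / totals[g] for g in totals}


def disparity_ratio(rates: dict[str, float]) -> float:
    """Lowest group rate divided by the highest; 1.0 means parity."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Made-up sample data for illustration only.
tickets = (
    [{"group": "group_a", "resolved": True}] * 8
    + [{"group": "group_a", "resolved": False}] * 2
    + [{"group": "group_b", "resolved": True}] * 6
    + [{"group": "group_b", "resolved": False}] * 4
)

rates = resolution_rates(tickets)
ratio = disparity_ratio(rates)
print(rates, round(ratio, 2))
if ratio < 0.8:  # review threshold is an assumption, not a legal standard
    print("Flag for human review: outcomes differ noticeably between groups")
```

A low ratio does not prove bias on its own. It marks where a human reviewer should look at the underlying conversations and training data.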

4. Improving System Performance

When AI systems operate like a "black box", delays in identifying issues are inevitable. In fact, 67% of failures are first noticed by users, which can erode trust and customer satisfaction [12]. By introducing transparency, developers can understand how outputs are generated, making it easier to pinpoint exactly where things go wrong. This level of visibility not only helps address current issues but also lays the groundwork for advanced performance monitoring.

One key advantage of transparency is the ability to catch subtle performance declines, often referred to as "agent drift." These gradual changes might not trigger traditional error alerts but can still impact system reliability over time [11]. Tools like OpenTelemetry provide comprehensive traceability, significantly speeding up debugging. For example, teams with full observability can resolve issues 10 times faster - 12 minutes compared to 2 hours [12]. Additionally, systems using distributed tracing have been shown to cut mean time to resolution (MTTR) by 73% [12]. This level of insight allows support managers to understand why an AI system might defer to a human agent, whether due to low confidence, a policy limitation, or a gap in knowledge. With this information, they can fine-tune the system's behavior, directly improving customer trust and satisfaction.
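
Gradual drift of this kind can be caught by comparing a recent window of outcomes against an earlier baseline. The sketch below is one simple approach, not the method used by any particular observability tool. The window sizes, the 5-point tolerance, and the sample history are assumptions.

```python
def success_rate(outcomes: list[bool]) -> float:
    """Fraction of interactions that succeeded."""
    return sum(outcomes) / len(outcomes)


def detect_drift(
    outcomes: list[bool],
    baseline_n: int = 100,
    recent_n: int = 50,
    max_drop: float = 0.05,
) -> bool:
    """Flag drift when the recent success rate falls more than max_drop below baseline."""
    if len(outcomes) < baseline_n + recent_n:
        return False  # not enough history to compare
    baseline = success_rate(outcomes[:baseline_n])
    recent = success_rate(outcomes[-recent_n:])
    return baseline - recent > max_drop


# Illustrative history: 95% success early on, 84% in the latest 50 interactions.
history = [True] * 95 + [False] * 5 + [True] * 42 + [False] * 8
print(detect_drift(history))  # True: an 11-point drop exceeds the 5-point tolerance
```

No single request fails loudly in this history, which is why a windowed comparison can surface a decline that per-request error alerts would miss.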

Transparent systems also enable proactive measures to prevent issues from affecting users. Verification pipelines, for instance, can assess AI outputs in real-time. If the system's confidence dips below an acceptable threshold, it can automatically repair or regenerate outputs [11]. This approach identifies problems that traditional monitoring might overlook entirely. For businesses leveraging platforms like ChatSpark (https://chatspark.io), this means achieving and maintaining a success rate of over 99% while keeping response latency at p95 under 3 seconds [12]. These benchmarks are critical for ensuring a seamless customer experience.
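
A verification step like the one described can be sketched as a small retry loop. This is a minimal illustration: the `generate` callable stands in for whatever model call a system uses, and the 0.8 threshold, the three-attempt limit, and the result fields are assumptions.

```python
from typing import Callable, Optional, Tuple


def verified_answer(
    generate: Callable[[str], Tuple[str, float]],
    question: str,
    threshold: float = 0.8,
    max_attempts: int = 3,
) -> dict:
    """Regenerate until confidence clears the threshold, else hand off to a human."""
    answer: Optional[str] = None
    confidence = 0.0
    for attempt in range(1, max_attempts + 1):
        answer, confidence = generate(question)
        if confidence >= threshold:
            return {"answer": answer, "confidence": confidence,
                    "attempts": attempt, "escalate": False}
    # Never send a low-confidence answer; record why the handoff happened.
    return {"answer": None, "confidence": confidence,
            "attempts": max_attempts, "escalate": True}


# Stub generator for illustration: the first draft is weak, the second is acceptable.
scripted = iter([
    ("Your order ships soon.", 0.55),
    ("Your order ships on Friday.", 0.91),
])
result = verified_answer(lambda q: next(scripted), "Where is my order?")
print(result)
```

Returning the attempt count and final confidence with the answer gives support managers the reason behind each handoff.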

5. Increasing Employee Confidence

When employees understand how AI operates, they feel empowered instead of displaced. Explainable AI (XAI) plays a key role here by offering clear reasoning behind each recommendation. This allows agents to review and validate AI-generated responses before sharing them with customers. By providing this level of transparency, XAI helps agents feel more in control, enabling them to confidently verify and communicate AI-driven answers.

This understanding significantly impacts decision-making. When agents grasp the "why" behind an AI suggestion, they can determine the best course of action - whether that’s delivering the response directly, asking additional questions, or escalating the issue to a specialist. As Luke Cuthbertson, Head of CX Consulting Practice at Route 101, puts it:

"Understanding the reasoning behind any response is critical in identifying the right path forward... It's clarity that drives trust and loyalty in CX engagements" [3].

Transparency also tackles the skepticism that often surrounds new technology. Transitioning from opaque systems to more transparent "glass box" models reassures employees that AI responses are grounded in verified policies rather than random statistical calculations or errors. Candace Marshall, Vice President of Product Marketing for AI and Automation at Zendesk, emphasizes:

"Transparency in AI... will help to increase trust and usability" [1].

This trust enables agents to focus on tasks that genuinely require human expertise, such as solving complex problems and building customer relationships.

Take platforms like ChatSpark (https://chatspark.io), for example. These tools allow support teams to confidently rely on AI to handle routine inquiries in over 85 languages, freeing agents to concentrate on more impactful interactions. When agents understand the system’s logic, they shift into the role of "Service Architects", overseeing and improving AI performance rather than merely reacting to its outputs. Feedback loops further enhance this dynamic, letting agents report issues and contribute to system refinements. This growing confidence among employees not only boosts system performance but also leads to better customer outcomes.

6. Supporting Better Customer Decisions

When customers understand why an AI system suggests a particular action, they can make more informed choices about their next steps. This approach shifts customer support from being a simple exchange of information to a collaborative problem-solving experience. For example, when an AI explains its reasoning - like saying, "We're recommending this product based on your positive reviews for similar items" - customers can determine whether the suggestion meets their needs or if they need to ask further questions. This level of clarity not only helps guide decisions but also avoids unnecessary delays in resolving issues.

Being upfront about limitations also saves time and minimizes frustration. If a system clarifies its capabilities - such as, "I can track your order status but cannot process refunds" [2] - customers can decide whether to continue with the bot or seek help from a human representative. This transparency in the "resolution path" gives customers the confidence to either proceed, ask more questions, or escalate the issue as needed.
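
The resolution path described above can be made explicit in code. In this minimal sketch, the intent names, the capability lists, and the reply wording are hypothetical. The point is that the bot states its limits and routes accordingly instead of guessing.

```python
# Hypothetical capability lists: what this bot may and may not handle.
CAPABILITIES = {
    "track_order": "track your order status",
    "update_address": "update your delivery address",
}
OUT_OF_SCOPE = {
    "process_refund": "process refunds",
}


def capability_statement() -> str:
    """One-sentence summary shown at the start of a conversation."""
    can = " and ".join(CAPABILITIES.values())
    cannot = " or ".join(OUT_OF_SCOPE.values())
    return f"I can {can}, but I cannot {cannot}."


def route(intent: str) -> str:
    """Pick the resolution path and say so plainly."""
    if intent in CAPABILITIES:
        return f"Sure, I can {CAPABILITIES[intent]}."
    if intent in OUT_OF_SCOPE:
        return f"I cannot {OUT_OF_SCOPE[intent]}. I'm connecting you with a human agent."
    return "I'm not sure I can help with that. Would you like to talk to a human agent?"


print(capability_statement())
print(route("process_refund"))
```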

The importance of clear communication is even greater in regulated industries. Take the case from February 2024, when the Civil Resolution Tribunal of British Columbia ordered Air Canada to compensate a passenger after its chatbot provided misleading information about bereavement fares [2]. This incident underscores how accurate and transparent AI communication can directly influence customer decisions. As Luke Cuthbertson, Head of CX Consulting Practice at Route 101, points out:

"Understanding the reasoning behind any response is critical in identifying the right path forward, be it asking additional questions, seeking additional support for more complex issues, or feeling confident in moving forward with the answer given" [3].

Tools like ChatSpark (https://chatspark.io) showcase this principle by delivering quick, consistent responses across various channels while clearly outlining what the system can and cannot do. When customers receive straightforward explanations about data handling and decision-making processes, they feel more confident sharing information and trusting the guidance provided. Whether troubleshooting an issue or exploring product options, this clarity strengthens trust and improves efficiency in customer support.

7. Building Brand Reputation

Transparency in AI customer support has become more than just a nice-to-have - it’s now a key differentiator. Companies that clearly explain how their AI systems work are gaining ground over those that rely on opaque, "black box" approaches. In fact, the demand for AI transparency has surged by 63% in the past year alone [3]. When businesses treat their AI systems with the same accountability as they do human employees, they foster deeper trust with their customers.

The risks of ignoring this shift are significant. A staggering 93% of customers will abandon a brand if they suspect their personal data has been mishandled [13]. This underscores the importance of prioritizing ethical practices over shortcuts. As Rebekah Carter from CX Today puts it:

"Trust can't survive on good intentions. It's built when companies publish standards, show evidence, and make failure survivable" [13].

Forward-thinking companies are already taking steps to lead in this area. Many have introduced "Trust Pages", which publicly detail their AI systems' behavior, data policies, and human oversight measures [13]. These pages act as a kind of safety manual, offering customers a clear understanding of what the AI can and cannot do, as well as how mistakes are addressed. This approach not only reinforces ethical AI practices but also builds on the trust and compliance benefits discussed earlier. By being upfront and transparent, these brands position themselves as leaders in responsible AI use.

Transparency also serves as a shield against reputational harm. AI systems equipped with clear escalation paths and human oversight mechanisms can prevent minor errors from spiraling into major trust issues [13][4]. When customers know they can easily reach a human agent to review an automated decision, they’re more likely to stay loyal - even after a mistake. For example, ChatSpark (https://chatspark.io) clearly communicates its system's capabilities and guarantees that customers can escalate issues to a human if needed. This proactive approach not only manages reputation but also reinforces trust and accountability.

Experts in the field highlight the broader benefits of this transparency. Tomas Gear, a Forward Deployed Engineer at Parloa, emphasizes:

"Transparency is what makes [trust] possible. It creates a shared language between CX and compliance and turns governance from a blocker into an enabler" [4].

With 65% of CX leaders now viewing AI as a strategic priority rather than a passing trend [1], brands that embrace transparency are doing more than meeting expectations - they’re carving out reputations as ethical, trustworthy leaders in customer experience.

How to Implement Transparency

Making transparency a core part of AI customer support requires ongoing effort - from sourcing data to monitoring performance after deployment. Every step should include clear documentation and accountability. These practices not only help meet regulations but also strengthen trust and improve overall performance.

Start with proactive disclosure at every customer interaction. Have the bot introduce itself in straightforward language, such as "Hi, I'm a bot! I'm here to help", and state its role, for example "to help you look up your order faster." Always offer an easy way for customers to switch to a human agent if needed.

Regularly perform red teaming exercises and bias audits to identify vulnerabilities early. Keep a detailed change log for all AI updates. Use tools like model cards to summarize the AI's capabilities, limitations, and training data. As Brandon Tidd, Lead Zendesk Architect at 729 Solutions, emphasizes:

"CX leaders must critically think about their entry and exit points and actively workshop scenarios wherein a bad actor may attempt to compromise your systems" [14].

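The model card and change log mentioned above can start as a small structured record kept alongside the model. The sketch below is illustrative: the `ModelCard` fields follow the capabilities, limitations, and training-data summary described in the text, while the field names and sample values are assumptions rather than a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date
from typing import Optional


@dataclass
class ChangeLogEntry:
    changed_on: str
    author: str
    summary: str


@dataclass
class ModelCard:
    """Short public summary of what a support model does (hypothetical schema)."""
    name: str
    version: str
    capabilities: list[str]
    limitations: list[str]
    training_data: str
    change_log: list[ChangeLogEntry] = field(default_factory=list)

    def log_change(self, author: str, summary: str, changed_on: Optional[str] = None) -> None:
        # Default to today's date when the caller does not supply one.
        self.change_log.append(
            ChangeLogEntry(changed_on or date.today().isoformat(), author, summary)
        )


# Sample values are invented for the example.
card = ModelCard(
    name="support-assistant",
    version="1.2.0",
    capabilities=["order tracking", "billing questions"],
    limitations=["cannot process refunds", "English and Spanish only"],
    training_data="Anonymized help-center articles and resolved tickets",
)
card.log_change("j.doe", "Added billing FAQ articles to the knowledge base", "2026-01-15")
print(json.dumps(asdict(card), indent=2))
```

Serializing the card to JSON makes it easy to publish on a trust page or attach to an audit request.
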
Platforms designed with transparency in mind can simplify this process. For example, ChatSpark (https://chatspark.io) automatically tracks over 15 performance metrics and provides monthly ROI reports. These reports outline AI resolution rates, cost savings, and knowledge gaps [15]. Features like "Unanswered Questions" help teams pinpoint areas for improvement each week, making transparency a practical tool for refining operations.
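
Metrics like these can be derived from plain conversation logs. The sketch below shows one possible calculation and is not ChatSpark's actual reporting logic: the record fields, the per-ticket cost, and the sample data are assumptions for illustration.

```python
from collections import Counter


def monthly_report(conversations: list[dict], cost_per_human_ticket: float) -> dict:
    """Summarize AI resolution rate, estimated savings, and knowledge gaps."""
    total = len(conversations)
    resolved = sum(1 for c in conversations if c["resolved_by_ai"])
    # Topics the AI could not resolve point to missing knowledge-base content.
    unanswered = Counter(c["topic"] for c in conversations if not c["resolved_by_ai"])
    return {
        "ai_resolution_rate": resolved / total if total else 0.0,
        "estimated_savings": resolved * cost_per_human_ticket,
        "top_unanswered": unanswered.most_common(3),
    }


# Made-up sample data for illustration only.
conversations = [
    {"topic": "shipping", "resolved_by_ai": True},
    {"topic": "shipping", "resolved_by_ai": True},
    {"topic": "billing", "resolved_by_ai": True},
    {"topic": "warranty", "resolved_by_ai": False},
    {"topic": "warranty", "resolved_by_ai": False},
]
report = monthly_report(conversations, cost_per_human_ticket=4.0)
print(report)
```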

The data highlights why this approach matters: 75% of businesses believe a lack of transparency could lead to higher customer churn, and 83% of CX leaders now rank data protection and cybersecurity as top priorities [14]. Steph Lundberg, a customer support professional, underscores this point:

"Implementation is just the tip of the AI iceberg, and you can't focus on implementation until you've taken the steps to understand the AI systems you're using" [2].

Conclusion

Transparency in AI customer support goes beyond being a technical requirement - it’s a cornerstone for building trust and driving growth. With 95% of customers expecting clarity in their interactions [3], the advantages of transparency are undeniable: it strengthens trust, reduces legal risks, enhances performance, and safeguards brand reputation. As Luke Cuthbertson, Head of CX Consulting Practice at Route 101, aptly states:

"Knowledge is power, but it's clarity that drives trust and loyalty in CX engagements" [3].

The urgency for transparency is growing. Customer demands for AI clarity have surged by 63% in 2026 compared to the previous year [3]. This shift signals a transformation in how people perceive automation - not as an enigmatic system, but as a tool they deserve to understand and question.

This evolving landscape presents a clear opportunity for businesses to gain a competitive edge. By auditing AI systems, addressing biases proactively, and maintaining detailed documentation, companies can empower both customers and employees. Turning AI from a "black box" into a "glass box" ensures informed decision-making and fosters trust.

At ChatSpark, we are fully aligned with this vision. With 83% of CX leaders prioritizing data protection and cybersecurity [1], the message is clear. As highlighted in the Zendesk CX Trends Report:

"Being transparent about the data that drives AI models and their decisions will be a defining element in building and maintaining trust with customers" [1].

Our commitment at ChatSpark is to embed transparency into every interaction, ensuring clarity and trust remain at the forefront. Businesses that recognize transparency as a strategic advantage will not only meet rising expectations but also deliver exceptional customer experiences. Clear and accountable AI is no longer optional - it’s the foundation for trusted and competitive customer support.

FAQs

What makes an AI support bot “transparent”?

An AI support bot is considered transparent when it discloses that it is a bot, explains in plain language how it reaches its decisions and what data it uses, states what it can and cannot do, and offers a clear path to a human agent. Being upfront in these ways builds trust and helps users understand how the system works.

How can we prove AI decisions for audits and compliance?

Transparency is key when validating AI decisions for audits and compliance. By maintaining detailed logs of activities, decision-making processes, and data usage, organizations can create a system that’s ready for audits. These logs serve as evidence of accountability, showing how decisions align with both ethical guidelines and legal requirements.

What should customers see when AI can’t help?

When AI falls short, customers should see a clear, honest statement of what the system cannot do, along with the next steps: how to reach a human agent or which alternative solutions are available. This kind of transparency builds trust and reassures customers that they are being kept in the loop.